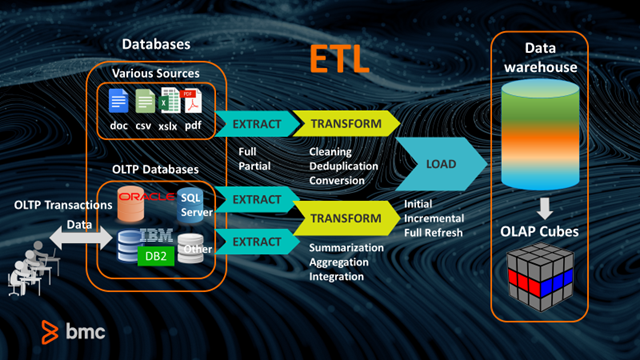

Transformation alters the structure, format, or values of the extracted data through a range of operations. Business requirements and the characteristics of the destination system determine which transformations are necessary. Transformations fall into three general categories: validating, cleansing, and preparing data for analysis. Some of the most important are mapping data types from source to target systems, flattening semistructured data intended for a relational database, and data validation.

Beyond relational databases, other potential sources for extraction include flat files such as HTML or log files.
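To make the transformations named above more concrete, here is a minimal Python sketch of flattening a semistructured record, mapping its fields to target types, and validating the result. The record layout, the `TARGET_TYPES` schema, and the function names are all hypothetical, chosen only for illustration; a real pipeline would take its schema from the destination system.

```python
from datetime import date


def flatten(record, parent_key="", sep="_"):
    """Flatten nested dicts into one level, joining key names with `sep`."""
    flat = {}
    for key, value in record.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key, sep))
        else:
            flat[full_key] = value
    return flat


# Hypothetical target schema: column name -> converter to the target type.
TARGET_TYPES = {
    "id": int,
    "customer_name": str,
    "customer_address_city": str,
    "total": float,
    "ordered_on": date.fromisoformat,
}


def transform(record):
    """Flatten a record, map each field to its target type, and validate it."""
    flat = flatten(record)
    row = {}
    for column, convert in TARGET_TYPES.items():
        if flat.get(column) is None:
            raise ValueError(f"missing required field: {column}")
        row[column] = convert(flat[column])
    # Example validation rule (an assumption): totals cannot be negative.
    if row["total"] < 0:
        raise ValueError("total must be non-negative")
    return row


# A made-up semistructured source record with string-typed values.
source_record = {
    "id": "42",
    "customer": {"name": "Ada", "address": {"city": "London"}},
    "total": "19.90",
    "ordered_on": "2024-01-15",
}
row = transform(source_record)
```

After this runs, `row` is a flat dictionary whose keys match relational column names and whose values carry the target types, so it could be handed to a load step as is.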

The transactional systems may run on local servers or on SaaS platforms.

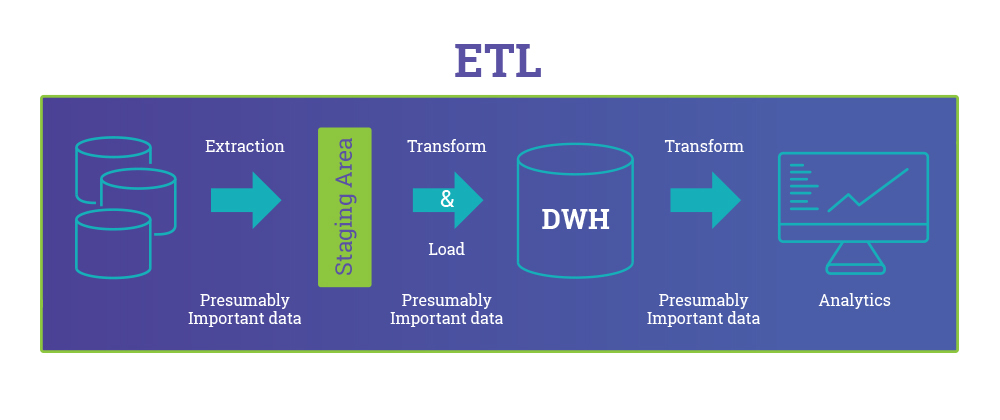

This is done to automate the process, reduce repetitive tasks and manage large amounts of data more efficiently. Learn more about Extract, Load, Transform (ELT) and the difference between ELT and ETL.

Understanding ETL (extract, transform, load)

Big data and cloud data warehouses are helping modern organizations leverage business intelligence (BI) and analytics for decision-making and new insights. Maintaining a data warehouse requires building a data ingestion process, and that in turn requires an understanding of ETL, its use cases, and its relationship with other components in the data analytics stack.

What is ETL?

ETL (extract, transform, load) is a general process for replicating data from source systems to target systems. It encompasses aspects of obtaining, processing, and transporting information so an enterprise can use it in applications, reporting, or analytics. Let's take a more detailed look at each step.

Extraction involves accessing source systems and reading and copying the data they contain. A data engineer may extract source data to a temporary location such as a data lake or a staging table in a database in anticipation of the steps that follow. The extraction step focuses on collecting data.

Many enterprise data sources are transactional systems where the data is stored in relational databases that are designed for high throughput and frequent write and update operations. These online transaction processing (OLTP) systems are optimized for operational data defined by schemas and divided into tables, rows, and columns.
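The extraction step described above, reading rows from a transactional source and copying them to a staging table, might look roughly like this in Python. It is only a sketch: two in-memory SQLite databases stand in for the OLTP source and the staging area, and the `orders` and `stg_orders` tables and the `extract_to_staging` function are invented for the example.

```python
import sqlite3

# Stand-in for a transactional (OLTP) source system.
source = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL)"
)
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "Ada", 19.9), (2, "Grace", 5.0)],
)
source.commit()

# Stand-in for the temporary staging location.
staging = sqlite3.connect(":memory:")
staging.execute("CREATE TABLE stg_orders (id INTEGER, customer TEXT, total REAL)")


def extract_to_staging(src, dst, batch_size=1000):
    """Copy rows from the source table to the staging table in batches."""
    cursor = src.execute("SELECT id, customer, total FROM orders")
    copied = 0
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break
        dst.executemany("INSERT INTO stg_orders VALUES (?, ?, ?)", batch)
        copied += len(batch)
    dst.commit()
    return copied


rows_copied = extract_to_staging(source, staging)
```

Reading in batches keeps memory use bounded when the source table is large. The data is copied unchanged here, since extraction only collects it and leaves any reshaping to the transform step.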

ETL is a three-step data integration process that extracts, transforms, and loads raw data from a source or multiple sources to a data warehouse, data mart, data lake, or database. Through the ETL process, data is properly formatted, normalized and loaded into these types of data storage systems to create a single, unified data view.

An acronym for extract, transform, load, ETL is used as shorthand to describe the three stages of preparing data. It became a common method of data integration in the 1970s as a way for businesses to use data for business intelligence. Organizations today use ETL for the same reasons: to clean and organize data for business insights and analytics.

ETL is also used to describe the commercial software category that automates the three processes.

How ETL works

Describing each step of the extract, transform and load process is the best way to understand how ETL works.

Extract

Extraction is the first step in the ETL process. Raw structured or unstructured data is extracted either by being exported or copied from one or many data sources. This data, which can come from many kinds of sources, is temporarily stored in a staging area.

Raw data is then transformed within the staging area, which often involves several processing functions.

Once data transformation is completed, data is loaded from the temporary staging area into the target data repository.
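Putting the three stages together, the flow from source to staging area to target repository can be sketched as three small Python functions. This is an illustrative outline under simple assumptions, not a description of any particular ETL product: the `sales` table, the sample records, and the cleansing rules (trim names, drop rows without a name, round amounts) are all made up.

```python
import sqlite3


def extract(source_rows):
    """Copy raw records out of the source into an in-memory staging list."""
    return list(source_rows)


def transform(staged_rows):
    """Cleanse staged records: trim names, drop empty ones, normalize amounts."""
    cleaned = []
    for row in staged_rows:
        name = (row.get("name") or "").strip()
        if not name:
            continue  # hypothetical rule: rows without a name are rejected
        cleaned.append((name.title(), round(float(row["amount"]), 2)))
    return cleaned


def load(conn, rows):
    """Write transformed rows from staging into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS sales (name TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    conn.commit()


# Made-up raw records with inconsistent formatting.
raw = [
    {"name": " ada ", "amount": "10.504"},
    {"name": "", "amount": "3"},
    {"name": "grace hopper", "amount": 7},
]

warehouse = sqlite3.connect(":memory:")  # stand-in for the target repository
staged = extract(raw)
load(warehouse, transform(staged))
loaded = warehouse.execute("SELECT name, amount FROM sales ORDER BY name").fetchall()
```

Keeping the stages as separate functions mirrors the description above: raw data sits untouched in staging until the transform step has run, and only cleaned rows reach the target.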
